Creating Jenkins pipelines with Ansible, part 2

The job-dsl and Pipeline plugins

This is a continuation of the previous post. For each project there are only two things we want to do.

- Check out the source code.

- Run the pipeline in the Jenkinsfile at the root of the repository.

This can be accomplished with the job-dsl plugin. It takes a definition of Jenkins jobs we want, and creates or updates them, as necessary. Somewhat confusingly, job-dsl itself needs to be run from a Jenkins job, known as a seed job.

The only reason for this job to exist is so that job-dsl can run; every other job should be created with job-dsl. Yes, it is a bit mind-bending, but it gets easier if you think of the seed job not as a job but as a script that you run from Jenkins.

In our case, every other job will look the same. They'll all be Pipeline jobs which run a Jenkinsfile after checking out a git repository from the same git host, so the job-dsl script can be generated from a Jinja2 template that loops over the repositories.

    {% for repository in jenkins_git_repositories %}
    pipelineJob('{{ repository }}') {
      definition {
        cpsScm {
          scm {
            git {
              remote {
                url('{{ jenkins_git_user }}@{{ jenkins_git_host }}:' +
                    '{{ jenkins_git_path }}/{{ repository }}.git')
              }
              branch('master')
            }
          }
          scriptPath('Jenkinsfile')
        }
      }
    }
    {% endfor %}
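
To make the template's output concrete, here is a small shell sketch of the remote URL that the loop assembles for each repository. The helper name `git_remote_url` is made up for illustration and is not part of the role; the values are the ones from the playbook shown later in this post.

```shell
#!/bin/sh
# Hypothetical helper: builds the same "user@host:path/repo.git" string that
# the template passes to url(). Not part of the role itself.
git_remote_url() {
    # $1 = git user, $2 = git host, $3 = path on the host, $4 = repository
    printf '%s@%s:%s/%s.git\n' "$1" "$2" "$3" "$4"
}

# One URL per repository, mirroring the Jinja2 for loop.
for repository in wjoel.com; do
    git_remote_url git zeus.wjoel.com git "$repository"
done
```

With those values the loop prints `git@zeus.wjoel.com:git/wjoel.com.git`, which is the remote that the generated Pipeline job would check out.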

First we need to check if the seed job has already been created.

    - name: Get list of jobs
      uri: url="http://127.0.0.1:8080/api/json?tree=jobs[name]" return_content=yes
      register: jobs

    - name: Check if seed job exists
      set_fact:
        seed_exists: "{{ seed_name in jobs.json.jobs|map(attribute='name')|list }}"

We'll create the seed job if it doesn't exist. If it exists, we'll update its configuration (omitted here, but you can see how in the source) to ensure that it is what it should be. When we run the seed job it will remove jobs for repositories that we've removed from the list and create jobs for repositories that we've added.

    - name: Create seed job
      uri:
        url: "http://127.0.0.1:8080/createItem?name={{ seed_name }}"
        method: POST
        HEADER_Content-Type: application/xml
        body: "{{ lookup('template', jenkins_seed_template) }}"
      register: jenkins_seed_updated
      when: not seed_exists

    - name: Run seed job
      uri:
        url: "http://127.0.0.1:8080/job/{{ seed_name }}/build"
        method: POST
        status_code: 201
      when: jenkins_seed_updated|success

This assumes all your git repositories are on the same host and under the same path, with Jenkins using the same username for all of them. You can change the template to take a list of dictionaries which include relevant settings if you need more flexibility, but otherwise all you need to do is list your repositories when applying the role.

    ---
    - hosts: localhost
      roles:
        - name: jenkins-pipeline
          jenkins_admin_pass: use-a-vault-variable-for-this
          jenkins_ssh_private_key: jenkins-id_rsa
          jenkins_ssh_public_key: jenkins-id_rsa.pub
          jenkins_git_user: git
          jenkins_git_host: zeus.wjoel.com
          jenkins_git_path: git
          jenkins_git_repositories:
            - wjoel.com

When you create a new repository you only need to add a single line to that list and run Ansible after you've committed your Jenkinsfile.

A Jenkinsfile for generating static websites

This site is built using Nikola, a static website generator written in Python, and its plugin to use Org mode for GNU Emacs. The repository contains little more than some .org files, which make up the posts, and some configuration and CSS styling. We'll add a Jenkinsfile that does the following:

- Create a Python virtual environment.

- Install a fixed version of Nikola in this virtual environment.

- Build the site using Nikola, which outputs a directory containing the site.

- Create a .tar.gz archive containing the output.

- Upload the archive to a server, for permanent storage.

- Download and unpack the archive to an internal "staging" web server.

- Do the same deployment to wjoel.com if staging looks fine.

Pipeline scripts are written in Groovy, but a lot of things are restricted by Jenkins, including certain substring operations. I seem to be better off avoiding Groovy by using the shell to do as much as possible (the output of which you can't get back into Groovy in any reasonable way, by the way). I'd usually put scripts in separate files, but to keep everything in the Jenkinsfile I'll write the scripts here in strings.

First we use node to say that we want to run on some build machine. It's possible to restrict the node to be of a certain type, like a 64-bit Linux server or a 32-bit Windows server, but there's no need for that with just a single Jenkins server doing everything.

Each stage defines a separate step in our pipeline.

    node {
        stage 'Checkout source'
        checkout scm
        def artifact = "\$(date +%Y-%m-%d)-\$(git rev-parse --short HEAD)-wjoel.com.tar.gz"

We'll assume that the packages required by Nikola have already been installed, but we need to set up a Python virtual environment and install Nikola and the Org mode plugin. We also copy a configuration file for the plugin, included in the git repository, to the right location.

Finally, we combine the current date with the short git hash to create a unique artifact name. The archive includes all output from Nikola, which lives in the output directory.

        stage 'Build site'
        sh """pyvenv nikola
              . nikola/bin/activate
              pip3 install wheel==0.29.0 Nikola==7.7.12 webassets==0.11.1
              nikola plugin -i orgmode
              cp init.el plugins/orgmode/init.el
              nikola build
              cd output && tar czf ../${artifact} *"""
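
The naming and archiving steps can be tried outside Jenkins. The following is a minimal sketch, assuming a shell with `tar` and `mktemp` available. The helper names `artifact_name` and `pack_output` are hypothetical, and the date and hash are passed in as placeholder arguments instead of being read from `date` and `git`, so the sketch runs without a repository.

```shell
#!/bin/sh
set -eu

# Hypothetical helper: compose the artifact name from a date, a short git
# hash and the site name, in the same order as the Jenkinsfile does.
artifact_name() {
    # $1 = date (YYYY-MM-DD), $2 = short hash, $3 = site name
    printf '%s-%s-%s.tar.gz\n' "$1" "$2" "$3"
}

# Hypothetical helper: pack the contents of a directory into an archive that
# is created next to it, mirroring "cd output && tar czf ../NAME *".
pack_output() {
    # $1 = directory to pack, $2 = archive file name
    ( cd "$1" && tar czf "../$2" * )
}

# Demo with a throwaway directory standing in for Nikola's output.
workdir=$(mktemp -d)
mkdir "$workdir/output"
echo '<h1>hello</h1>' > "$workdir/output/index.html"

# Placeholder date and hash, for illustration only.
artifact=$(artifact_name 2016-08-01 abc1234 wjoel.com)
pack_output "$workdir/output" "$artifact"

# List the archive contents; files sit at the top level of the archive.
tar tzf "$workdir/$artifact"
```

Because `*` does not match names starting with a dot, a top-level dotfile in the output directory would be left out of an archive built this way. That may or may not matter for a given site.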

Uploading the artifact is easy with scp, but requires that Jenkins is allowed to log in and that the destination host is in known_hosts.

        stage 'Upload artifact'
        sh "ssh zeus.wjoel.com mkdir -p artifacts/wjoel.com"
        sh "scp ${artifact} zeus.wjoel.com:artifacts/wjoel.com/"

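Staging and production are deployed with the same command. It ends with the path of the web root, so appending -staging to it unpacks the archive into /usr/share/nginx/www-staging instead of /usr/share/nginx/www. Before deploying to production we use input to ask for human approval.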

        stage 'Deploy to staging'
        def deployCommand = """
            ssh zeus.wjoel.com scp zeus.wjoel.com:artifacts/wjoel.com/${artifact} /tmp
            ssh zeus.wjoel.com tar xf /tmp/${artifact} --group www-data -C /usr/share/nginx/www"""
        sh "${deployCommand}-staging"

        stage 'Deploy to production'
        input 'Deploy to production?'
        sh "${deployCommand}"
        sh "ssh zeus.wjoel.com rm /tmp/${artifact}"
    }
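
The staging and production deployments differ only in a suffix appended to the end of the command string. As a rough illustration, here is a dry-run sketch in shell that prints the commands instead of running them. The function name `deploy_commands` and the artifact name are placeholders, not something the Jenkinsfile defines.

```shell
#!/bin/sh
# Hypothetical dry-run helper: prints the two deployment commands instead of
# running them. $1 = artifact name, $2 = optional suffix for the web root.
deploy_commands() {
    host=zeus.wjoel.com
    printf 'ssh %s scp %s:artifacts/wjoel.com/%s /tmp\n' "$host" "$host" "$1"
    printf 'ssh %s tar xf /tmp/%s --group www-data -C /usr/share/nginx/www%s\n' \
        "$host" "$1" "${2:-}"
}

artifact=example.tar.gz   # placeholder name for illustration

deploy_commands "$artifact" -staging   # targets /usr/share/nginx/www-staging
deploy_commands "$artifact"            # targets /usr/share/nginx/www
```

The trick only works because the target directory is the last thing in the command. If another option were added after `-C /usr/share/nginx/www`, the suffix would end up on that option instead, so passing the directory as an explicit parameter is the more robust design.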

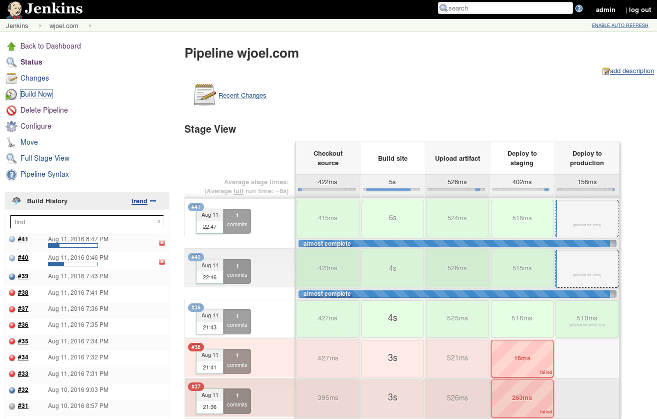

Here's what the pipeline looks like. Concurrent builds get separate workspaces, as build directories are called in Jenkins. When that happens the "Build site" stage takes a bit longer, since the Python dependencies need to be downloaded into the new workspace. Within a single build, all stages share the same workspace. For this Jenkinsfile it would not be an issue if each stage ran on a different node, since the only file shared between stages is the artifact archive, which is uploaded to a shared server.

You can do a lot of interesting things with Pipeline, such as parallel builds, but the point I'm trying to make is that, despite the number of Ansible lines in these posts, it's easy to get started with Jenkins. You can make use of it for your own projects, whether they are public or private, once you have Jenkins installed and configured.

In my opinion it makes a lot of sense to ignore all the plugins and legacy of Jenkins and use it as a simple and solid automation tool for building, testing, and deploying things. Even static websites.